Introduction to Surveillance Architecture: An Examination of Law Enforcement Technology

Over the past decade, American law enforcement has quietly assembled one of the most extensive surveillance networks in democratic history. Through automated license plate readers, gunshot detection systems, and predictive policing algorithms, police departments have created infrastructure capable of tracking millions of citizens without warrants, judicial oversight, or meaningful public debate.

Automated License Plate Readers (ALPRs)

Automated License Plate Reader (ALPR) systems capture images of every vehicle passing fixed cameras or mobile units, recording license plates along with time, location, and vehicle characteristics. Companies like Flock Safety and Vigilant Solutions have transformed these local tools into nationwide tracking networks through data-sharing agreements with thousands of law enforcement agencies.

Gunshot Detection Systems

ShotSpotter (now SoundThinking) markets its acoustic sensors as precision tools for detecting gunfire. The company claims 97% accuracy and promises faster police response to shootings. By 2022, over 130 cities had deployed the system, often using federal COVID relief funds to cover the substantial costs.

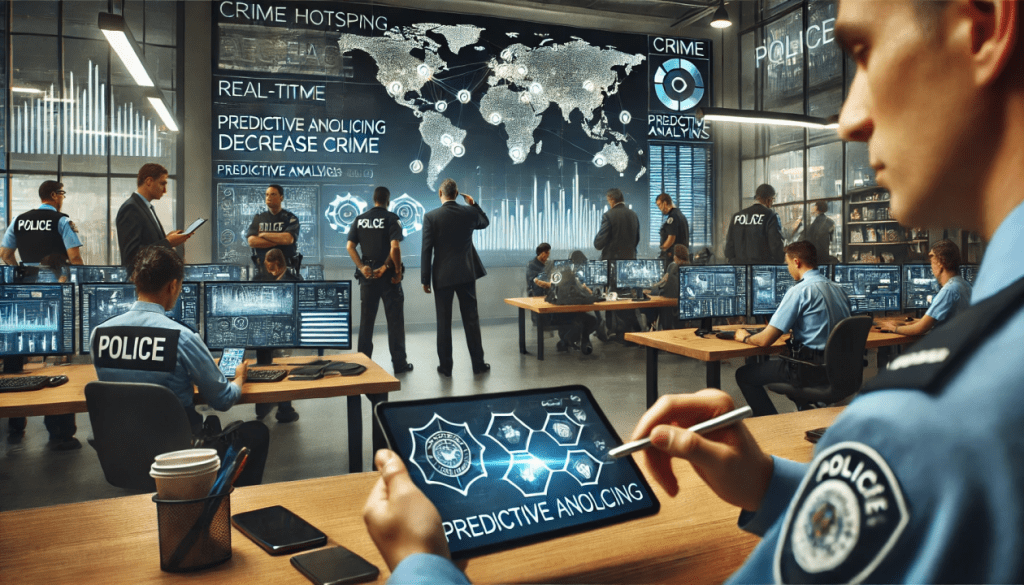

Predictive Policing Algorithms

Companies like PredPol (now Geolitica) promised to revolutionize law enforcement by predicting where crimes would occur. Applying models adapted from earthquake aftershock forecasting to historical crime data, these algorithms generated maps showing areas where police should focus patrols. The appeal was obvious: mathematical precision applied to public safety.

Addressing Critical Challenges Within Surveillance Systems

This analysis examines how these technologies function operationally, the entities that control and profit from them, and the empirical record of what they actually accomplish. Drawing upon government audits, court filings, and public records obtained through Freedom of Information Act requests, the evidence reveals a consistent pattern: surveillance systems that promise surgical precision but deliver mass monitoring, that assert effectiveness while producing demonstrably minimal results, and that operate with insufficient accountability despite consuming substantial taxpayer resources. The findings presented here warrant serious consideration from policymakers, civil liberties advocates, legal scholars, and the communities most directly affected by these technologies.

Automated License Plate Recognition

The scale of this infrastructure demands serious attention. By 2025, Flock Safety operated camera networks feeding a centralized database accessible to more than 7,000 agencies nationwide.

Predictive Policing Algorithms

These systems processed historical arrest records, incident reports, and related data to produce inaccurate probability maps that nonetheless informed patrol deployment decisions.

Gunshot Detection Systems

The company claims a 97 percent accuracy rate. The findings of my research were striking: only 9.1 percent of police deployments initiated by ShotSpotter alerts resulted in any evidence of actual gun-related criminal activity.
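The gap between a 97 percent accuracy claim and a 9.1 percent evidence rate is less paradoxical than it looks. A headline accuracy figure does not by itself determine how often alerts correspond to real gunfire; that share (the positive predictive value) depends heavily on how rare real gunfire is among the loud sounds the sensors evaluate. The minimal sketch below applies Bayes' rule to show this. It assumes, purely for illustration, that the vendor's 97 percent can be read as both sensitivity and specificity, which the company has not established, and the prevalence values are hypothetical. The 9.1 percent figure measures evidence found when police respond, a different quantity, so the sketch explains the pattern rather than reproducing that number.

```python
def positive_predictive_value(sensitivity: float, specificity: float,
                              prevalence: float) -> float:
    """Share of alerts that correspond to real gunfire, via Bayes' rule.

    sensitivity: P(alert | real gunfire)
    specificity: P(no alert | not gunfire)
    prevalence:  share of evaluated sounds that are real gunfire
    """
    true_alerts = sensitivity * prevalence
    false_alerts = (1 - specificity) * (1 - prevalence)
    return true_alerts / (true_alerts + false_alerts)


# Assumption for illustration only: treat the claimed 97 percent as both
# sensitivity and specificity. Prevalence values are hypothetical.
for prevalence in (0.5, 0.1, 0.01):
    ppv = positive_predictive_value(0.97, 0.97, prevalence)
    print(f"prevalence {prevalence:>4}: {ppv:.1%} of alerts are real gunfire")
```

Under these assumed inputs, the share of alerts that reflect real gunfire falls from about 97 percent when half of evaluated sounds are gunshots to roughly 25 percent when one in a hundred is, even though the "accuracy" figure never changes.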

Civil Liberties Safeguards

Contracts between law enforcement agencies and surveillance technology vendors frequently incorporate confidentiality provisions that materially limit public oversight capacity.

Explore Modern Surveillance Systems

The surveillance technologies examined here exist within a structured ecosystem of public-private partnerships that creates institutional incentives misaligned with public accountability. Private companies develop and actively market surveillance systems to police departments, frequently offering introductory deployments at reduced cost to establish market presence and operational dependencies. Once agencies integrate these technologies into standard operating procedures, the switching costs, both financial and institutional, make it politically and administratively difficult to discontinue even demonstrably ineffective systems.

Automated License Plate Readers

Automated License Plate Reader (ALPR) systems function by capturing photographic images of every vehicle passing fixed cameras or mobile units mounted on police cruisers. Each capture records the license plate number alongside time stamps, geographic coordinates, and vehicle characteristics.
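Each capture is, in effect, a small structured record. The minimal sketch below models one as a Python dataclass whose fields follow the description above (plate, time stamp, coordinates, vehicle characteristics); the field names, the `camera_id` field, and the sample values are hypothetical and do not reflect any vendor's actual schema. It illustrates why pooled reads matter: grouping reads by plate and sorting them by time turns isolated snapshots into a movement history.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class PlateRead:
    """One ALPR capture: the plate plus when, where, and what was seen."""
    plate: str
    timestamp: datetime
    latitude: float
    longitude: float
    camera_id: str            # hypothetical ID for a fixed camera or mobile unit
    vehicle_description: str  # e.g. color and body type


def movement_histories(reads: list[PlateRead]) -> dict[str, list[PlateRead]]:
    """Group pooled reads by plate and sort each group by time.

    A single read shows one place at one moment; sorting every read for a
    plate by timestamp turns pooled reads into a location history.
    """
    by_plate: dict[str, list[PlateRead]] = defaultdict(list)
    for read in reads:
        by_plate[read.plate].append(read)
    for plate_reads in by_plate.values():
        plate_reads.sort(key=lambda r: r.timestamp)
    return dict(by_plate)


# Hypothetical reads from two cameras; plates and coordinates are invented.
reads = [
    PlateRead("ABC1234", datetime(2024, 5, 1, 8, 15), 41.88, -87.63, "cam-2", "gray sedan"),
    PlateRead("XYZ9876", datetime(2024, 5, 1, 8, 20), 41.90, -87.65, "cam-1", "red pickup"),
    PlateRead("ABC1234", datetime(2024, 5, 1, 7, 50), 41.85, -87.62, "cam-1", "gray sedan"),
]

for read in movement_histories(reads)["ABC1234"]:
    print(read.timestamp.time(), read.camera_id, (read.latitude, read.longitude))
```

In this sketch no dedicated tracking logic is needed beyond a group-and-sort; the history emerges from retaining and pooling reads, which is why sharing data across agencies expands what a network can reconstruct.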

Gunshot Detection Systems

ShotSpotter, now operating under the corporate name SoundThinking, markets its acoustic sensor technology as a precision instrument for real-time gunfire detection. The company claims a 97 percent accuracy rate and asserts that its technology enables faster, more targeted police response to shooting incidents. By 2022, more than 130 municipalities had deployed the system, with many leveraging federal American Rescue Plan Act funds to finance the substantial installation and subscription costs.

Predictive Policing Algorithms

By 2016, twenty of the nation’s fifty largest municipal police forces had deployed predictive policing software. These systems processed historical arrest records, incident reports, and related data to produce probability maps that informed patrol deployment decisions. Police departments invested millions of dollars in these systems, with many initial deployments financed through federal grants administered by the Department of Justice and the Department of Homeland Security.
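One way to see how historical records become a probability map is a self-exciting scoring rule of the kind borrowed from earthquake aftershock modeling: each recorded incident temporarily raises the expected rate in its grid cell, and that boost decays over time. The sketch below is a simplified illustration of that general idea under stated assumptions, not any vendor's implementation. The grid cells, incident history, background rate, trigger weight, and decay rate are all hypothetical.

```python
import math
from collections import defaultdict

# Hypothetical historical records: (grid_cell, days_before_today).
# A real system would derive cells from geocoded incident reports.
HISTORY = [
    ((2, 3), 1), ((2, 3), 2), ((2, 3), 30),
    ((5, 1), 3),
    ((0, 0), 60), ((0, 0), 61),
]

BACKGROUND_RATE = 0.05  # hypothetical baseline score per cell
TRIGGER_WEIGHT = 0.5    # hypothetical boost contributed by each past record
DECAY_PER_DAY = 0.1     # hypothetical exponential decay rate


def cell_intensities(history, background=BACKGROUND_RATE,
                     weight=TRIGGER_WEIGHT, decay=DECAY_PER_DAY):
    """Score each cell: background rate plus decaying boosts from past records.

    Only cells with at least one record are scored here; a fuller sketch
    would include every cell at the background rate.
    """
    scores = defaultdict(lambda: background)
    for cell, days_ago in history:
        scores[cell] += weight * math.exp(-decay * days_ago)
    return dict(scores)


def probability_map(scores):
    """Normalize scores so they sum to 1 across the scored cells."""
    total = sum(scores.values())
    return {cell: s / total for cell, s in scores.items()}


def top_cells(history, k=2):
    """Return the k cells with the highest share, i.e. where patrols would go."""
    probs = probability_map(cell_intensities(history))
    return sorted(probs, key=probs.get, reverse=True)[:k]


print(top_cells(HISTORY))
```

The sketch makes one property visible: the map can only reflect what was previously recorded, so any skew in where incidents were documented carries straight through into where patrols are directed next.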

Comprehensive Analysis of Surveillance Metrics

The accuracy and integrity of surveillance technology data require sustained independent scrutiny. ShotSpotter’s documented false-alert rate raises serious questions about how frequently acoustic detection errors trigger police actions that harm uninvolved civilians. ALPR systems introduce additional error sources through misread plates and outdated database information, creating potential for wrongful stops and detentions. Companies have demonstrated little voluntary transparency regarding error rates or remediation procedures, making the case for mandatory independent auditing clear.
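The two ALPR error sources named above can be illustrated with a hotlist check. The sketch assumes a hotlist of plates of interest, each carrying a hypothetical `last_verified` date, and a small, illustrative table of characters that plate-recognition software may confuse (0/O, 1/I, 8/B, 5/S). It flags a read that matches an entry only after swapping one confusable character, the situation in which a misread could tie the wrong vehicle to an alert, and it flags entries that have not been confirmed recently. The names, the 30-day threshold, and the data are assumptions, not any vendor's logic.

```python
from dataclasses import dataclass
from datetime import date

# Illustrative (not exhaustive) pairs of characters plate OCR may confuse.
CONFUSABLE = {"0": "O", "O": "0", "1": "I", "I": "1", "8": "B", "B": "8", "5": "S", "S": "5"}


@dataclass
class HotlistEntry:
    plate: str
    reason: str
    last_verified: date  # hypothetical field: date the entry was last confirmed


def one_char_variants(plate: str) -> set[str]:
    """Plates that differ from `plate` by swapping one confusable character."""
    variants = set()
    for i, ch in enumerate(plate):
        if ch in CONFUSABLE:
            variants.add(plate[:i] + CONFUSABLE[ch] + plate[i + 1:])
    return variants


def check_read(read_plate: str, hotlist: list[HotlistEntry], today: date,
               max_age_days: int = 30) -> list[tuple[str, str, str]]:
    """Compare one read against a hotlist and annotate each hit with warnings.

    Returns (hotlist plate, reason, note) tuples; an empty list means no hit.
    """
    hits = []
    variants = one_char_variants(read_plate)
    for entry in hotlist:
        if entry.plate == read_plate:
            note = "exact match"
        elif entry.plate in variants:
            note = "possible misread: differs by one confusable character"
        else:
            continue
        age = (today - entry.last_verified).days
        if age > max_age_days:
            note += f"; entry unverified for {age} days"
        hits.append((entry.plate, entry.reason, note))
    return hits


# Hypothetical hotlist entry and read.
hotlist = [HotlistEntry("ABC1O34", "reported stolen", date(2024, 1, 2))]
print(check_read("ABC1034", hotlist, today=date(2024, 5, 1)))
```

Here the read "ABC1034" hits the entry "ABC1O34" only through a 0/O swap, and the entry itself has gone 120 days without confirmation: both warning conditions that, in this sketch, a human should review before any stop.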

145

Automated License Plate Readers

Captures the deployment rate and accuracy of license plate recognition systems nationwide.

275

Gunshot Detection Systems

Details coverage and response times of acoustic gunfire detection networks in urban areas.

320

Predictive Policing Algorithms

Evaluates algorithmic impact on crime prediction and resource allocation effectiveness.

380

Civil Liberties Impact

Assesses privacy concerns and legal challenges associated with surveillance technologies.

Insights from Policy Experts

These technologies persist not primarily because of demonstrated effectiveness, but because of institutional momentum, sustained vendor lobbying, federal funding incentives, and the enduring appeal of technological responses to complex social challenges. The evidence base assembled through government audits, litigation, and public records advocacy provides a foundation for the rigorous policy reassessment these systems require.

The growing skepticism from affected communities, civil liberties organizations, independent oversight bodies, and an increasing number of legislators and jurists suggests that a meaningful policy inflection point may be approaching. The surveillance infrastructure constructed over the past decade is not immutable. Dismantling it and replacing it with governance frameworks that genuinely balance public safety and civil liberties will require sustained community engagement, continued investigative rigor, and political leadership with the institutional courage to demand that law enforcement technology serve constitutional values rather than circumvent them.

The comprehensive report offered vital clarity on automated license plate readers, enhancing public understanding of surveillance practices.

Dr. Lisa Hammond

Senior Privacy Analyst

The detailed examination of gunshot detection systems provided a balanced view of benefits and civil rights concerns.

Professor Michael Chen

Criminology Researcher

The study of predictive policing algorithms revealed critical insights into algorithmic fairness and community impact.

Angela Martinez

Civil Liberties Advocate

Margin of the Law has a newsletter.
