There was a time when distribution meant trucks, printing presses, broadcast towers, and physical scarcity. Information moved slowly because moving it required infrastructure. Gatekeepers were not optional middlemen. They were the system itself. Reaching millions of people required capital, licenses, and institutional backing. Without those resources, your message stayed local.

That model is gone.

What replaced it is technically open and practically controlled. Digital platforms removed the physical barriers to publishing. Anyone can post. Anyone can broadcast. The entry cost dropped to nearly zero. But the removal of physical gatekeepers did not produce the free marketplace of ideas the early internet promised. It produced something more complex: a layered system where visibility, suppression, amplification, and behavioral influence operate simultaneously, largely out of public view.

Understanding how modern information actually moves requires a shift in framing. The relevant question is no longer who can publish. It is who gets seen. In the digital environment, those are entirely different things, and the gap between them is where distribution power lives.

The Architecture of Visibility

Digital platforms present the appearance of equal access. Content can be uploaded by anyone. Publication requires no editorial approval. In theory, any idea can reach any audience.

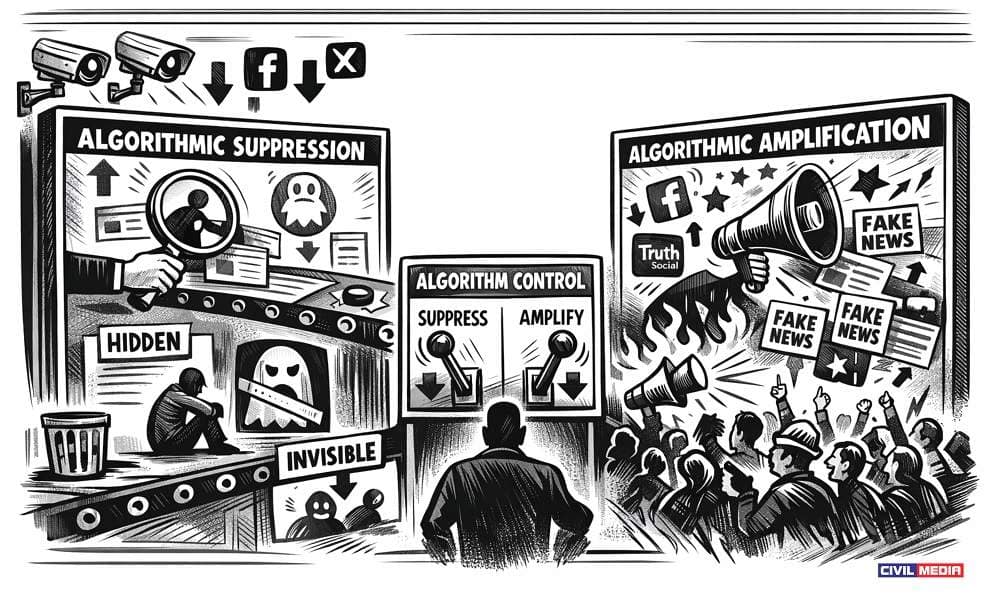

In practice, reach is not determined by the act of publishing. It is determined by algorithmic infrastructure that operates underneath every piece of content the moment it enters the platform. These systems decide what gets surfaced in feeds, what gets recommended to new audiences, what gets throttled before it gains momentum, and what gets buried without notification or explanation.

The systems are not neutral. They are engineered with specific objectives and regularly tuned based on engagement data, advertiser preferences, and external pressure from governments and policy bodies. The result is a distribution environment where two identical pieces of content can produce radically different outcomes depending on how the platform has internally classified the source, the topic, or the framing.

One post reaches half a million people. An identical post from a different account reaches five thousand. Neither creator is told what happened. Neither has recourse. From the outside, it looks like the organic performance of content in a competitive space. From the inside, it is distribution engineering applied invisibly at scale.

Algorithmic Gatekeeping: The Hidden Decision Layer

Traditional gatekeepers were identifiable. An editor decided what ran in the newspaper. A producer decided what aired. These decisions were made by humans whose names and affiliations could be known, and whose reasoning could, at least in principle, be challenged.

Modern gatekeepers are embedded in recommendation systems and content classifiers that the public cannot inspect. These systems do not simply reflect what users want to see. They actively shape what users come to prefer by controlling the information environment that forms preferences in the first place.

Recommendation algorithms determine which topics trend across the platform, which voices are surfaced as authoritative on a given subject, which narratives gain legitimacy through repeated exposure, and which ideas get flagged internally as risky, sensitive, or undesirable before any human reviewer touches them.

The control is not blunt. It operates through gradient adjustments. A video that would organically reach 500,000 people reaches 50,000 instead. A search query returns officially approved perspectives in the first three results, while dissenting analysis sits eight pages deep where almost no one looks. A post approaching viral momentum stalls just short of the threshold that would push it into broader distribution.

Nothing is technically removed. No formal censorship occurs. The content exists. But the public never meaningfully encounters it, which produces the same functional outcome as removal. The difference is that suppression through invisibility leaves no visible record and creates no opportunity for appeal.

Government Influence in the Distribution Layer

Alongside platform control sits a less visible layer of government-aligned influence over information flow. This extends well beyond traditional state media in the historical sense.

During public health crises, government agencies have actively shaped platform content policies, resulting in the suppression of information that was later vindicated. Intelligence-linked organizations have operated influence campaigns that framed geopolitical events in ways favorable to policy objectives. Public-private partnerships have coordinated messaging across platforms in ways that blur the line between editorial independence and institutional direction.

The critical structural shift is that governments no longer require direct control over information channels to influence what the public sees. Influence over the systems that control distribution produces the same outcome with far less visibility and accountability.

The process works through a feedback loop. Government or institutional bodies signal that certain content is misleading, dangerous, or socially destabilizing. Platforms respond by adjusting algorithmic treatment of that content. Media organizations aligned with official positions reinforce the approved narrative. By the time an average person encounters the topic, the approved interpretation already carries the weight of apparent consensus, not because that consensus formed organically, but because distribution systematically favored one interpretation over others.

Accessibility Is Not the Same as Visibility

A common misreading of the current information environment treats accessibility as equivalent to openness. The reasoning goes: because more information is available online than at any previous point in history, the public has more access to diverse perspectives than ever before.

This is technically accurate and practically misleading.

Accessibility describes whether information exists somewhere online. Visibility describes whether a person is realistically likely to encounter it. These are separate conditions, and only the second one determines actual information flow at a population level.

The bottleneck in the current system is not availability. It is the narrow band of narratives that occupies the recommended feed, the first page of search results, the trending section, and the algorithmically curated timeline. These surfaces receive the overwhelming share of attention. Content that exists outside them exists in a kind of digital periphery that most users never reach, not because they are incapable of reaching it, but because the default behavior of consuming what is presented is strongly reinforced by platform design.

The average user does not explore. They consume what appears in front of them. What appears in front of them is not a neutral sample of available information.

The Economic Layer of Content Filtering

Beneath the political and algorithmic dimensions of distribution control sits a commercial layer that independently shapes what information spreads.

Digital platforms are advertising businesses. Their revenue depends on user engagement and advertiser satisfaction. This produces specific filtering effects that operate entirely on economic logic, independent of any political objective.

Content that generates strong emotional responses is algorithmically prioritized because it drives engagement. Content that makes advertisers uncomfortable is suppressed because it creates commercial risk. Content that keeps users on the platform longer is amplified regardless of its accuracy or analytical quality.

This creates incentive pressure that extends backward into content production. Creators who operate inside the system learn, through trial and error, what distribution rewards and what it penalizes. Over time, they adjust. The result is a gradual convergence toward simplified narratives over nuanced analysis, emotional intensity over measured reasoning, and conformity to platform norms over independent inquiry. Distribution does not just determine what spreads. It reshapes what gets produced in the first place.

The Contraction of Intellectual Diversity

When distribution channels narrow, even without formal censorship, intellectual diversity contracts at a population level. Alternative perspectives do not disappear. They lose the capacity to propagate at scale.

This produces a systematic distortion in perceived consensus. People observe that certain views are widely visible and assume that those views are widely held, and that the views they never see are fringe, discredited, or nonexistent. That perception is then reinforced through social behavior. Individuals self-censor to avoid professional or social consequences. Institutions align with dominant narratives to maintain legitimacy. Dissent becomes costly even when it is factually grounded.

The range of publicly acceptable thought tightens without any law requiring it, without any formal prohibition, and without most participants recognizing that it is happening. Distribution pressure achieves this outcome more efficiently than censorship because it leaves no record, generates no martyrs, and faces no legal challenge.

What This Means for Information Consumers

The practical implications of understanding this system are specific and actionable.

First-page search results are not a neutral sample of available evidence. Trending content reflects platform amplification decisions, not organic public interest. Perceived consensus on contested topics may indicate distribution pressure rather than actual agreement. The absence of a perspective from mainstream surfaces does not indicate that the perspective lacks merit or support.

Navigating the current information environment accurately requires deliberate effort to seek out sources outside the default distribution channels, to distinguish between what is widely visible and what is well-supported, and to remain alert to the difference between evidence-driven conclusions and institutionally amplified narratives.

We do not live in an era of information scarcity. We live in an era of managed attention. The information is out there. The system is designed to determine whether it reaches you.

What do you think?